Agentic AI: Hype vs reality in enterprise adoption

What is actually real, what is breaking in production and what the future of agentic AI looks like for enterprises that plan to get it right.

Research drawn from: Gartner Hype Cycle for AI 2025, Deloitte Emerging Technology Trends 2025, McKinsey AI Survey late 2025, NIST AI Risk Management Framework 2025.

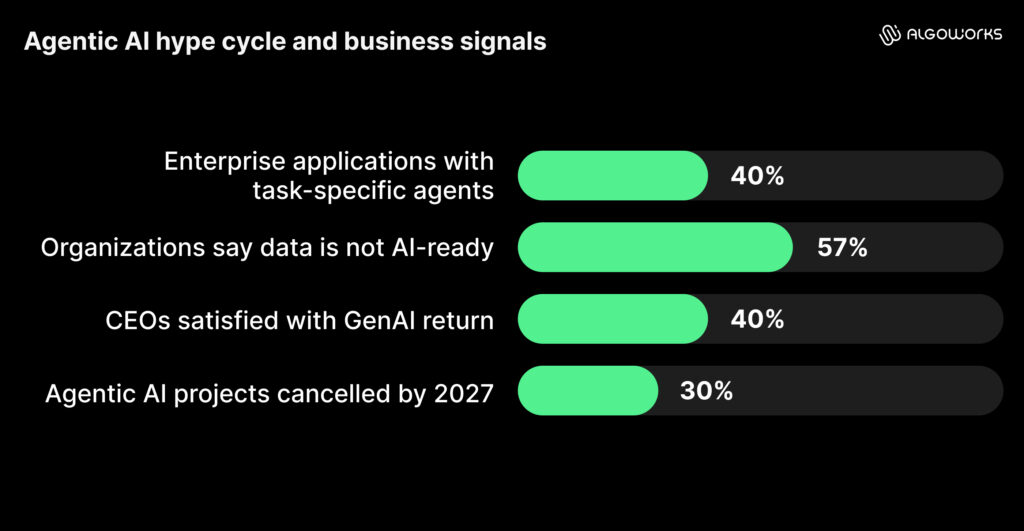

Most enterprises have been running agentic AI in some form since 2025, building on earlier momentum seen in AI across industries. The average organization manages 12 agents right now and Gartner expects 40% of enterprise applications to carry task-specific agents before this year ends. Adoption was not the hard part. What nobody planned for was the production reality that followed.

Agentic AI does something GenAI never did, and the difference is most visible in how it is being applied across software development workflows. It does not wait for a prompt; it plans, uses tools, executes tasks across multiple steps and acts without human intervention. That is a structural difference. Agentic AI acts on outputs rather than presenting them, which means every failure mode that made GenAI unreliable in production (hallucinations, inconsistent outputs, data quality gaps) now operates at higher cost and with greater consequence.

Analyst tension: Gartner maps agentic AI and GenAI as separate technologies on its Hype Cycle. Other researchers argue “agentic” simply extends the same LLM curve with expanded capability. Both positions hold weight and the architectural shift is real. The shared failure modes are equally real and enterprise leaders need to evaluate both.

Where does agentic AI stand right now in the hype cycle?

Gartner’s AI Hype Cycle placed GenAI in the Trough of Disillusionment, the phase where early excitement fades and leaders start questioning ROI, scalability and real business value. It also placed AI agents at the Peak of Inflated Expectations, where excitement is high, investments are accelerating and expectations are running ahead of what most organizations can reliably deliver. That puts the two technologies most enterprises are currently running at opposite ends of the maturity curve, despite one largely powering the other.

| Technology | Hype Cycle Position | Business Signal |
| --- | --- | --- |
| Generative AI | Trough of Disillusionment | ROI scrutiny rising, recalibration underway |
| AI Agents | Peak of Inflated Expectations | High investment, low production deployment |
| AI-Ready Data | Peak of Inflated Expectations | 57% of organizations say data is not AI-ready |
| ModelOps / AI Engineering | Slope of Enlightenment | Infrastructure investments gaining traction |
| AI-Native Software Engineering | Innovation Trigger | Augmentation in practice, not full autonomy |

The average company spent $1.9 million on GenAI projects in 2024. Less than 30% of CEOs were satisfied with the return. Gartner’s projection for agentic AI is equally direct: more than 40% of projects will face cancellation by end of 2027, not because the technology failed, but because organizations never built the foundations it requires.

Why is agentic AI adoption still so low despite heavy investment?

This is where AI hype vs reality shows up in the data. The gap between what organizations report about agentic AI and what they have shipped is wide.

| Metric | Number | Source |
| --- | --- | --- |
| Enterprises experimenting with agentic AI | 62% | McKinsey, late 2025 |
| Organizations with production-ready systems | 14% | Deloitte, 2025 |
| Organizations actively running agents in production | 11% | Deloitte, 2025 |
| Organizations with no formal agentic AI strategy | 35% | Deloitte, 2025 |
| AI initiatives abandoned in 2024 | 42% | S&P Global, 2024 |
| Enterprise AI pilots failing to reach production | 80%+ | RAND Corporation, 2025 |

62% of enterprises are experimenting with agentic AI. Only 11% have anything running in production. That distance is not a technology problem. It is a failure of execution, governance and sequencing, areas where structured AI services become critical.

Why do most agentic AI projects fail before they reach production?

The failures follow a consistent pattern, and organizations often realize where AI systems tend to break in real-world pipelines only after the budget is committed, not before.

Across the agentic AI projects we have worked on, the failure is rarely inside the model. It sits in the sequence. Teams build before they govern, automate before they validate and push to scale before they understand what breaks at the edges. When the surrounding system is not ready, the model cannot compensate for it.

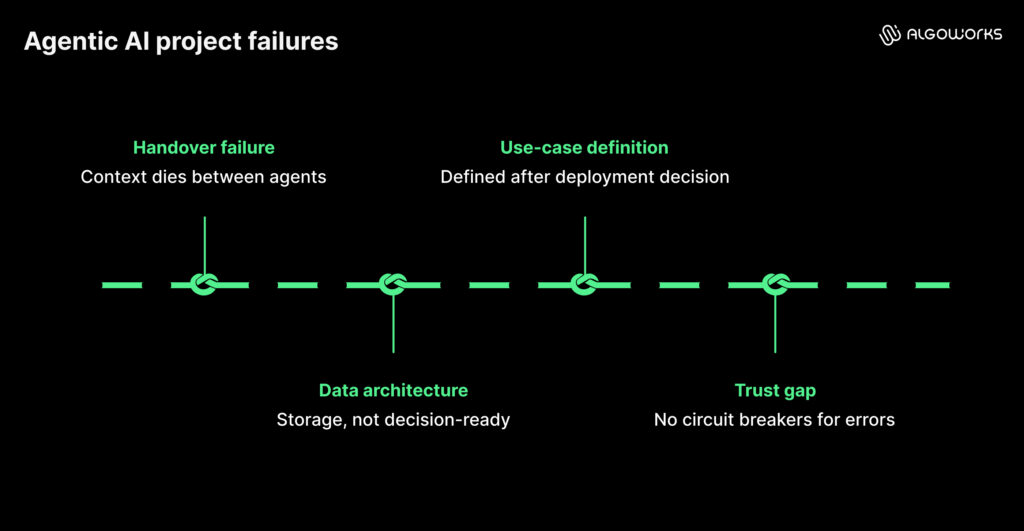

Handover failure: When context dies between agents

When agents work in sequence, context degrades between steps. The follow-on agent misclassifies or loses an issue the previous step correctly identified and the error compounds with every additional agent added to the chain.
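One way to picture the fix is a structured handoff that each agent appends to instead of rewriting. The sketch below is illustrative only: `Finding`, `Handoff` and `missing_labels` are hypothetical names, not part of any agent framework, and a real system would carry far richer context than a label and a line of evidence.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Finding:
    """One issue identified by an agent, with the evidence behind it."""
    label: str
    evidence: str
    source_agent: str


@dataclass
class Handoff:
    """Hypothetical envelope passed from one agent to the next in a chain."""
    task_id: str
    findings: list[Finding] = field(default_factory=list)

    def extend(self, agent: str, label: str, evidence: str) -> "Handoff":
        # Append rather than overwrite, so upstream findings survive each hop.
        return Handoff(self.task_id, [*self.findings, Finding(label, evidence, agent)])


def missing_labels(before: Handoff, after: Handoff) -> set[str]:
    """Labels present before a step that the step failed to carry forward."""
    return {f.label for f in before.findings} - {f.label for f in after.findings}


triage = Handoff("ticket-1").extend("triage", "billing_error", "invoice total mismatch")
resolved = triage.extend("resolver", "refund_due", "duplicate charge found")
assert missing_labels(triage, resolved) == set()
```

Checking `missing_labels` after every step turns a silent loss of context into something a pipeline can detect and stop on.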

Poor data architecture: Why is enterprise data not AI-ready?

Enterprise data was designed for ETL pipelines and retrieval, not to give an agent real-time business context. Agents wired into existing infrastructure without rearchitecting for agentic consumption either produce unreliable outputs or stall on ambiguous context.

- 48% of organizations cite searchability of data as a challenge to AI automation (Deloitte, 2025)

- 47% cite reusability of data as a challenge (Deloitte, 2025)

- 57% estimate their data is simply not AI-ready (Gartner, 2025)

Use cases defined after the decision to deploy

Board conversations demand AI momentum, so the use case gets defined after the deployment decision, not before. When the underlying process is poorly understood, an agent accelerates the dysfunction rather than fixing it.

The trust gap

When a chatbot gives a wrong answer, a person catches it. When an agent hits an error mid-workflow, it self-corrects by accessing more systems, making more decisions and compounding the original problem before anyone has visibility. Most deployments have not built the circuit breakers that stop this in time.
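A circuit breaker for an agent can be as simple as a budget on actions and on consecutive errors. The following is a minimal sketch under assumed thresholds; `AgentCircuitBreaker` and `CircuitOpen` are hypothetical names, and the limits shown are placeholders rather than recommended values.

```python
class CircuitOpen(Exception):
    """Raised when the agent must stop and hand control to a person."""


class AgentCircuitBreaker:
    """Hypothetical guard that caps actions and consecutive errors in one workflow run."""

    def __init__(self, max_actions: int = 20, max_consecutive_errors: int = 2):
        self.max_actions = max_actions
        self.max_consecutive_errors = max_consecutive_errors
        self.actions = 0
        self.consecutive_errors = 0

    def call(self, action, *args, **kwargs):
        # Refuse to act once either budget is exhausted.
        if self.actions >= self.max_actions:
            raise CircuitOpen("action budget exhausted")
        if self.consecutive_errors >= self.max_consecutive_errors:
            raise CircuitOpen("too many consecutive errors")
        self.actions += 1
        try:
            result = action(*args, **kwargs)
        except Exception:
            self.consecutive_errors += 1
            raise
        # A successful action resets the error streak.
        self.consecutive_errors = 0
        return result
```

Routing every tool call through `breaker.call(...)` means an agent that keeps "self-correcting" runs out of budget and surfaces to a human instead of touching more systems.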

Analyst note: You cannot automate something you do not trust. Most agentic AI systems run on LLMs, which means the reliability concern is built in. If you want to automate something completely, you have to trust it completely first.

Challenges of managing agentic AI in production

The question of whether agentic AI delivers has been replaced by a harder operational one: managing what has already been deployed without a governance plan. Half of the agents already running operate in complete isolation, with no shared context, no coordination and no central oversight. Teams built them function by function and the result is fragmentation nobody designed for. Practitioners call it agent sprawl. It behaves like shadow IT, except the systems are making autonomous decisions.

The risk underneath agent sprawl is not one agent failing. It is errors compounding silently across isolated agents before anyone catches them. The industry is converging on self-verification as the fix: feedback loops that let agents validate outputs before passing work downstream.
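A self-verification loop can be sketched in a few lines. This is an assumption-laden illustration: `produce` stands in for an agent call and `verify` for an independent check (schema validation, business rules or a second model), and neither corresponds to a specific product API.

```python
from typing import Callable


def run_with_verification(
    produce: Callable[[str], str],
    verify: Callable[[str], list[str]],
    task: str,
    max_attempts: int = 3,
) -> str:
    """Return an output only after `verify` reports no problems.

    `verify` returns a list of problems; an empty list means the output passed.
    """
    feedback: list[str] = []
    for _ in range(max_attempts):
        # Feed the previous attempt's problems back into the next attempt.
        prompt = task if not feedback else f"{task}\nFix these issues: {'; '.join(feedback)}"
        output = produce(prompt)
        feedback = verify(output)
        if not feedback:
            return output
    # Escalate instead of passing unverified work downstream.
    raise RuntimeError(f"unverified after {max_attempts} attempts: {feedback}")
```

The design choice that matters is the last line: when verification keeps failing, the loop raises rather than returning its best guess, so the next agent never receives unchecked work.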

Gartner and Forrester identify multi-agent orchestration as the defining infrastructure priority of 2026, playing the same role for agents that Kubernetes played for container management, and enterprises are already orchestrating AI agents across systems and workflows. Organizations still on single-model architectures are already finding that one provider outage breaks entire workflows. Multi-model routing is no longer an advanced architecture choice. It is the production baseline.
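At its simplest, multi-model routing means trying providers in priority order and falling through on failure. The sketch below assumes each provider is wrapped as a plain callable; `route_with_fallback` and the provider names used with it are hypothetical, and a production router would also weigh cost, latency and task type.

```python
from typing import Callable, Sequence


class AllProvidersFailed(Exception):
    """Raised when no provider in the list returned a response."""


def route_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each (name, call) pair in priority order; return (name, response)."""
    errors: dict[str, str] = {}
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # in practice, catch the client's specific error types
            errors[name] = repr(exc)
    # Surface every provider's error so the outage is visible, not swallowed.
    raise AllProvidersFailed(errors)
```

With two wrapped providers, an outage on the first becomes a logged error and a response from the second instead of a broken workflow.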

What does successful agentic AI deployment look like in practice?

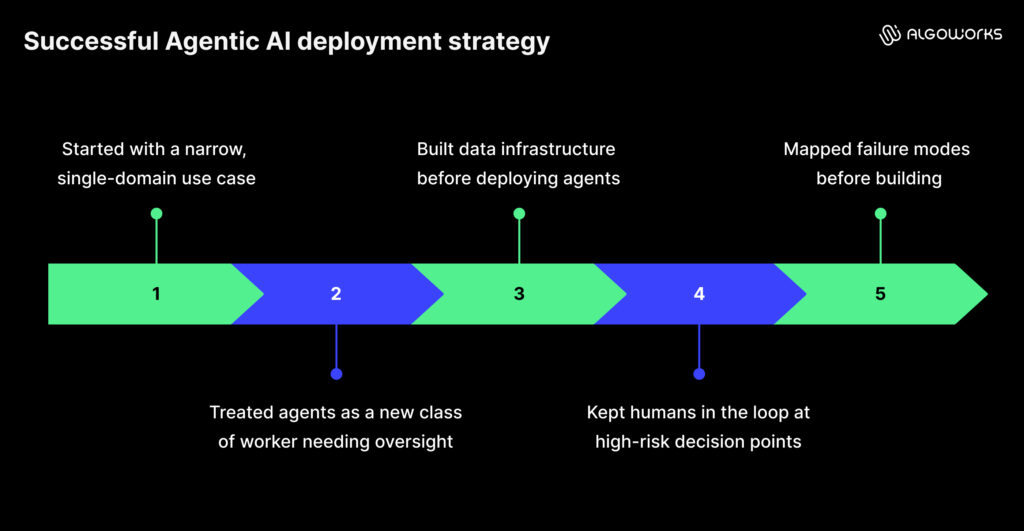

Among the 11 to 14% of organizations that have moved agentic AI into production, the differentiating factor is not vendor selection or budget size. It is the order in which they made decisions.

| What the successful minority did | What the majority did |
| --- | --- |
| Started with a narrow, single-domain use case | Built for enterprise-wide transformation from day one |
| Treated agents as a new class of worker needing oversight | Deployed agents as standard software releases |
| Built data infrastructure before deploying agents | Layered agents onto existing data architecture |
| Kept humans in the loop at high-risk decision points | Pursued full autonomy as the target state |
| Mapped failure modes before building | Discovered failure modes after launch |

The organizations producing results moved carefully, not quickly. They mapped where the system could break, built the supporting infrastructure first and validated one thing in production before expanding to the next.
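Keeping humans in the loop at high-risk decision points can be expressed as a gate in front of execution. This is a minimal sketch: the `Risk` levels, `ProposedAction` and the `approve` callback are hypothetical, and how an action gets classified as high risk is the hard part that this example deliberately leaves out.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Risk(Enum):
    LOW = 1
    HIGH = 2


@dataclass
class ProposedAction:
    description: str
    risk: Risk


def execute(action: ProposedAction, approve: Callable[[ProposedAction], bool]) -> str:
    """Run low-risk actions directly; high-risk ones need `approve(action)` to return True."""
    if action.risk is Risk.HIGH and not approve(action):
        return "blocked"
    # Placeholder for the real side effect (API call, write, payment, etc.).
    return "executed"
```

In a real deployment `approve` might open a review ticket or page an on-call owner; here it is just a function that returns a decision.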

Is your organization ready for agentic AI?

Before committing budget, these questions need honest answers from inside the organization, not from a vendor presentation.

- Is your data structured for agent consumption or simply stored somewhere accessible?

- Do you trust your current GenAI outputs enough to automate consequential action on top of them?

- Is this use case deterministic enough to automate or does it still require human judgment at critical points?

- When the agent is wrong, does a recovery path exist?

- Are you solving a clearly defined process problem or building something that stops at the demo stage?

Organizations furthest ahead in agentic AI adoption followed the same sequence: start with one well-scoped problem, get it to production, learn precisely where it breaks, then expand. Sequencing consistently outperformed speed.

What is the future of agentic AI beyond the hype?

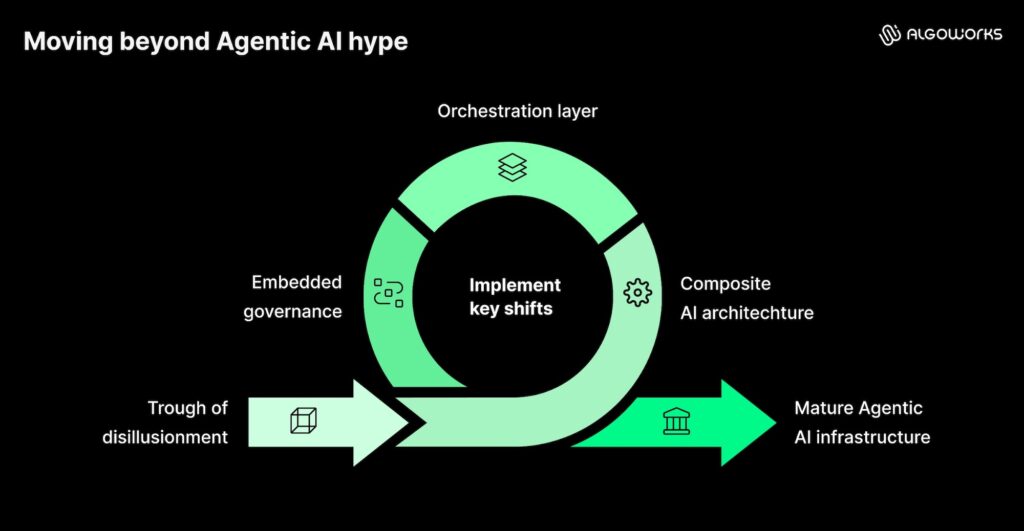

The Trough of Disillusionment reflects how agentic AI was sold and bought, not whether the technology works. Three shifts separate organizations moving past it from those still in it.

Composite AI is replacing single-agent architecture

Coordinated systems combining machine learning, traditional automation and agents are replacing the single-agent model, with each component handling what it does reliably. Gartner identifies composite AI on the Innovation Trigger as the architecture that will outperform multi-agent deployments in production.

Orchestration delivers more value than the agent itself

The orchestration layer, routing tasks to the right model at the right cost with the right oversight, is where production value concentrates in 2026. Agentic AI trends this year point toward coordination infrastructure as the differentiator, not individual agent capability.

Governance designed in scales faster than governance added later

NIST updated its AI Risk Management Framework in 2025 to include specific profiles for agentic AI, requiring agent tool access mapping and automated circuit breakers. Embedding governance into the workflow from the start lets organizations move faster and break less. Gartner projects AI agents will participate in 15% of business decisions by 2028, with 85% still involving humans. At that maturity level, agentic AI is infrastructure, not an initiative.
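Tool access mapping, in its most basic form, is an explicit allowlist checked before every tool call. The sketch below is an illustration of that idea and is not drawn from the NIST text; the agent names, tool names and the `call_tool` helper are all hypothetical.

```python
# Hypothetical agent and tool names, for illustration only.
TOOL_ACCESS: dict[str, frozenset[str]] = {
    "invoice_agent": frozenset({"read_invoice", "flag_for_review"}),
    "support_agent": frozenset({"read_ticket", "draft_reply"}),
}


def authorize(agent: str, tool: str) -> None:
    """Raise PermissionError unless `tool` is explicitly mapped to `agent`."""
    allowed = TOOL_ACCESS.get(agent, frozenset())
    if tool not in allowed:
        raise PermissionError(f"{agent} is not mapped to tool {tool!r}")


def call_tool(agent: str, tool: str, registry: dict, **kwargs):
    """Check the access map before dispatching to the tool implementation."""
    authorize(agent, tool)
    return registry[tool](**kwargs)
```

Because unknown agents default to an empty set, anything not written into the map is denied, which keeps the map itself an accurate inventory of what each agent can touch.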

What is actually real about agentic AI (and what is not)?

| Common claim | Honest assessment |
| --- | --- |
| Agentic AI automates end-to-end enterprise workflows today | Reliable in narrow, well-governed domains. Not valid as a general claim. |
| Multi-agent systems compound intelligence | They also compound hallucination risk. Governance infrastructure must come first. |
| Agentic AI is a genuinely new category | Architecturally distinct, yes. LLM-dependent with shared failure modes, also yes. |
| The trough signals the technology is failing | It signals that the technology is maturing past inflated expectations. |
| Organizations must move fast or fall behind | The organizations furthest ahead moved most carefully, not most quickly. |

Bottom line: Agentic AI is real. The production gap is also real. The organizations winning are not those with the largest budgets or most ambitious roadmaps. They are the ones who asked hard questions first, built infrastructure before deploying agents and treated the trough as a design constraint, not a temporary setback.

Agentic AI is not a race to deploy; it is a discipline to get right. The organizations that win will be the ones that build for reliability before scale. In the end, outcomes won’t depend on how fast you moved, but on how well you built.

Sources: Gartner Hype Cycle for AI 2025, Gartner Predicts 2026, Deloitte Emerging Technology Trends 2025, Deloitte State of AI 2026, McKinsey AI Survey late 2025, McKinsey AI Trust Maturity Survey 2026, S&P Global 2024, RAND Corporation 2025, NIST AI Risk Management Framework 2025, Forrester 2026, HBR October 2025.

Agentic AI refers to systems that can plan, make decisions and execute multi-step tasks with minimal human input, rather than just responding to prompts.

Because most organizations are not ready at the system level. Data, governance and workflow clarity are usually missing when teams move from pilot to production.

Only in narrow, well-defined use cases. Most enterprise workflows still need human oversight and control points.

Errors compounding across workflows without visibility, especially when multiple agents operate without coordination.

Getting the sequence right: clear use case, prepared data, built-in governance, then controlled scaling.